Impactful AI product work isn't unlocked by merely picking the right model or adding a chatbot to your interface. It goes deeper into how AI is intentionally configured to participate in scoping, development, testing, and release.

Right now, most teams are experimenting with AI, but far fewer are structuring it. And without structure, AI is more likely to compound chaos than value.

When structured intentionally, it provides tremendous leverage to your dev team. It can accelerate scoping, reduce boilerplate, improve testing coverage, and speed up iteration. But consistently unlocking that value requires more than access to a model's most expensive pricing tier. It requires intentional context design.

At strideUX, we've been working alongside [Plastr](https://plastr.io) to design, develop, and test a more reliable way to structure AI inside the product lifecycle instead of layering it on top. This model is starting to level up teams and projects, not because it makes AI more intelligent, but because it delivers better context at the right time.

The context bloat problem

Before we get into how this new tool improves AI product development, let's establish why Plastr dedicated the time to creating it in the first place. It comes down to the big downside most orgs encounter when they start trying out spec-driven development.

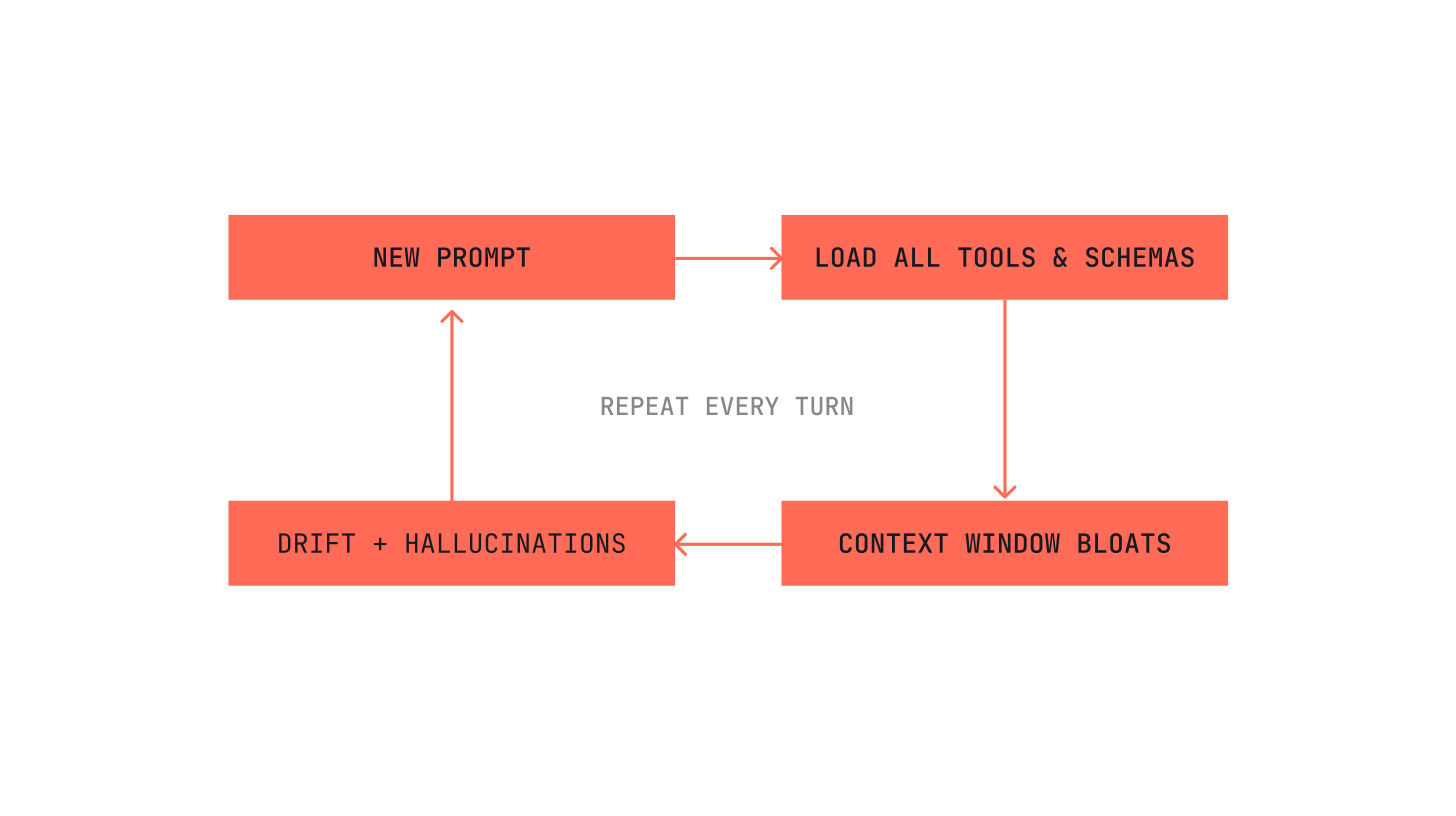

The dominant pattern for most teams today is straightforward.

Dump specs, documentation, tool schemas, instructions, and integrations into a giant prompt and hope the model holds it all together. Or take a slightly gentler approach: add initial context, then gradually append more information as the session grows over hours and days.

All of this is well-intentioned, but most teams find it eventually breaks down. The more context that's loaded in, the more likely the model is to ignore parts of it, the more inconsistent its outputs become, and the greater the chance of hallucinations or drift.

We'd all like to believe that AI gets more accurate with more information. Yet in reality, it gets increasingly overwhelmed. More tokens don't equal more reliability. They increase entropy.

This isn't an AI capability issue. It's an AI strategy issue.

The MCP trap

Since its introduction in late 2024, the Model Context Protocol (MCP) has become a widely adopted standard for connecting AI tools to external systems.

The core idea makes sense on paper: give the model access to everything it might need so it can collaborate across systems, especially in complicated product workflows like spec-driven development.

The problem that many teams experience isn't in the concept, though. It's in the implementation.

In MCP-heavy AI-assisted development, the description of every connected tool is typically loaded into the context window with every prompt. The same goes for schemas and integration definitions, which are resent on each request. So as the conversation grows, the context grows, and every session carries more and more weight because all of that material is reloaded, even when most of it isn't needed.
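To make the compounding effect concrete, here is a rough back-of-the-envelope sketch in Python. The numbers (20 tools, 400 tokens per tool description, 500 tokens per turn, 30 turns) are illustrative assumptions, not measurements of any real MCP client, and `tokens_sent` is a hypothetical helper written for this example.

```python
def tokens_sent(turns: int, fixed_overhead: int, tokens_per_turn: int) -> int:
    """Total tokens sent across a session when every request resends
    the fixed overhead plus the full conversation history so far."""
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn          # the conversation grows each turn
        total += fixed_overhead + history   # the overhead is resent every time
    return total


# Assumed numbers, for illustration only.
ALL_TOOLS = 20 * 400       # every tool description loaded on every prompt
RELEVANT_TOOLS = 2 * 400   # only the two tools the task actually needs

bulk = tokens_sent(turns=30, fixed_overhead=ALL_TOOLS, tokens_per_turn=500)
focused = tokens_sent(turns=30, fixed_overhead=RELEVANT_TOOLS, tokens_per_turn=500)
print(bulk, focused, bulk - focused)
```

Under these assumptions, the unused tool descriptions alone account for 216,000 tokens over a 30-turn session. The exact figures will differ for every setup, but the shape of the problem stays the same: fixed overhead is paid on every turn.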

Ironically, the more information MCP accumulates to "remember everything," the harder it becomes for the model to reliably remember and use any of it.

It's like pulling everything out of your pantry and refrigerator and putting it on the table just to make a peanut butter and jelly sandwich. Technically, you have access to everything. Practically, you've created a mess, and you might not even be able to find the extra crunchy Jif.

AI doesn't need everything everywhere all at once. It only needs what's relevant.

Context, delivered intentionally

The real question isn't whether AI should have context, but how context should be delivered.

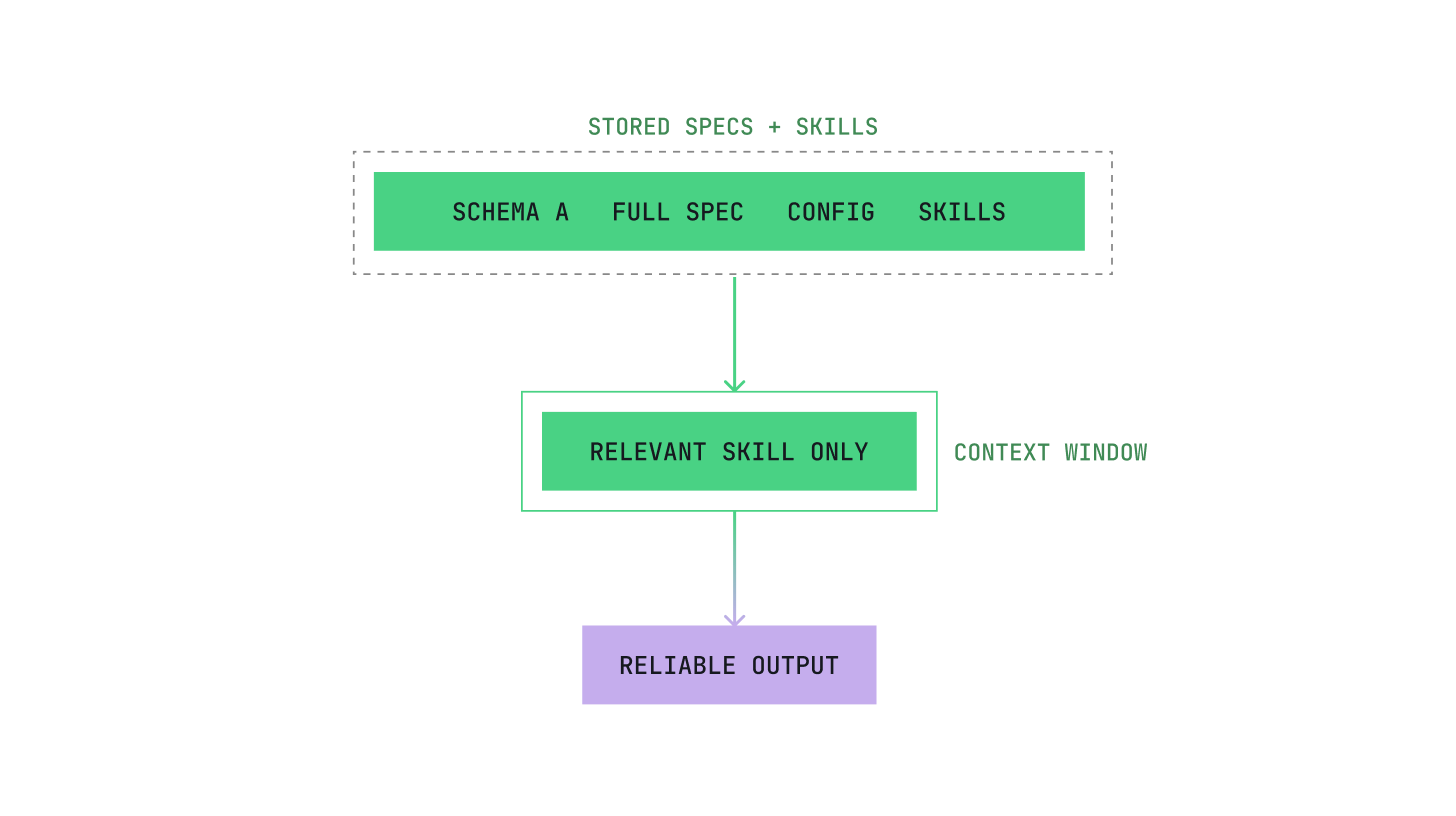

Instead of bulk-loading everything into a single window, what if AI retrieved only what it needed for the task at hand?

Pull out the ingredients required for the meal. Leave the rest in the pantry.

This approach treats context as infrastructure, not as a dump site. It prioritizes retrieval over accumulation. Reliability comes from focus, not volume.
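As a minimal sketch of retrieval over accumulation, the Python below tags each piece of context with topics and hands the model only the pieces that match the task. The `ContextDoc` type, the `retrieve` function, and the document names are all hypothetical, chosen for illustration. A real system would likely use richer matching than simple topic overlap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextDoc:
    name: str
    topics: frozenset[str]
    text: str


def retrieve(docs: list[ContextDoc], task_topics: set[str]) -> list[ContextDoc]:
    """Return only the docs that share at least one topic with the task."""
    return [doc for doc in docs if doc.topics & task_topics]


# The "pantry": everything the team has written down (hypothetical contents).
PANTRY = [
    ContextDoc("coding-standards", frozenset({"component", "style"}),
               "Always structure a component this way."),
    ContextDoc("ticket-template", frozenset({"ticket"}),
               "Tickets need a title and acceptance criteria."),
    ContextDoc("architecture-decisions", frozenset({"architecture", "api"}),
               "Services communicate over a shared API layer."),
]

# Building a component only pulls the ingredients for that meal.
needed = retrieve(PANTRY, {"component"})
print([doc.name for doc in needed])
```

Everything else stays in the pantry until a task actually asks for it.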

This is where AI product strategy becomes real.

If AI is helping build your product, then your strategy must define:

- how context is structured

- how it's retrieved

- how it's enforced

- how it evolves over time

Without that structure, every session starts from scratch. Standards drift. Patterns shift. Outputs vary wildly between developers. And your team eventually abandons it.

With it, however, AI maintains accuracy and starts delivering real value.

Why FlyDocs

Plastr realized that the protocol most teams were adopting wasn't designed for long-term AI collaboration inside real engineering workflows. So they reached out to our team at strideUX to help build a different approach.

FlyDocs doesn't rely on a traditional Linear MCP. Instead, it uses structured skills that activate at specific lifecycle moments, keeping context focused and execution deterministic.

This eliminates bloat and keeps execution accurate. It moves teams away from "AI slop" and toward AI-driven development that feels fast, structured, and accountable.

Here's how FlyDocs works:

Still start with specs

Structure still begins with specifications.

Share whatever you have: product specs, coding standards, architecture decisions, or build process rules. Spec-driven development remains foundational. AI performs better when it works from written project rules instead of guessing from training data.

The difference is how those specs are delivered to the model.

Instead of dumping them wholesale into every prompt, they become structured components inside a broader system.

Let skills take it from there

This is where skills come in.

A skill is a reusable, organized bundle of instructions and optional scripts that tells your AI exactly how to perform a specific task. For example, "always structure a component this way" or "run this script to create a ticket."

In FlyDocs, skills organize your data by topic and executable instruction. This means less model improvisation and more deterministic, executable scripts based on the specs you've shared.

Unlike MCP tools, skills don't load everything into context every turn. They retrieve only what supports the task in front of you. This simple shift improves reliability at scale.
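The idea can be sketched in a few lines of Python. This is not FlyDocs' actual skill format, which isn't shown in this article. The `Skill` type, the `stage` field, and the `create_ticket` script are hypothetical stand-ins that illustrate a bundle of instructions plus an optional script, activated only at the lifecycle moment it belongs to.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    name: str
    stage: str                  # lifecycle moment that activates the skill
    instructions: str           # written rules the model should follow
    script: Optional[Callable[[dict], str]] = None  # optional deterministic step


def create_ticket(payload: dict) -> str:
    """Stand-in for a real ticketing call; it just formats a string."""
    return f"TICKET: {payload['title']}"


SKILLS = [
    Skill("component-structure", "development",
          "Always structure a component this way."),
    Skill("create-ticket", "scoping",
          "Run this script to create a ticket.", script=create_ticket),
]


def skills_for(stage: str, skills: list[Skill] = SKILLS) -> list[Skill]:
    """Load only the skills registered for the current lifecycle stage."""
    return [skill for skill in skills if skill.stage == stage]


def run(skill: Skill, payload: dict) -> str:
    """Prefer the deterministic script; fall back to the instructions."""
    if skill.script is not None:
        return skill.script(payload)
    return skill.instructions


active = skills_for("scoping")
print(run(active[0], {"title": "Add login"}))
```

During scoping, only the ticket skill is in play, and its script produces the same result every time. The component rules never enter the context until development begins.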

Unlocking real AI product strategy

Cultivating an impactful AI product strategy takes work. It means treating context as infrastructure, defining retrieval intentionally, enforcing guardrails automatically, and measuring reliability.
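Measuring reliability can start small. One possible metric, offered here as an assumption rather than a description of how FlyDocs measures anything, is to run the same task several times and check how often the outputs agree. The `consistency_rate` function below is a hypothetical helper that does exactly that.

```python
from collections import Counter


def consistency_rate(outputs: list[str]) -> float:
    """Share of outputs identical to the most common output.

    1.0 means every run produced the same result.
    """
    if not outputs:
        raise ValueError("need at least one output")
    _, count = Counter(outputs).most_common(1)[0]
    return count / len(outputs)


# Four runs of the same task (made-up outputs): three agree, one drifts.
runs = ["Button.tsx", "Button.tsx", "button.jsx", "Button.tsx"]
print(consistency_rate(runs))
```

Exact string matching is a crude proxy, but tracking even a simple number like this over time shows whether structure is actually reducing drift.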

When AI participates in development with structure, its output becomes more consistent. Standards are followed. Decisions compound instead of resetting every session.

At both Plastr and strideUX, FlyDocs is one implementation of this philosophy. It's how we help teams structure AI across their full product lifecycle, from scoping to release, without overwhelming the model or the team. In fact, we even use it ourselves with all of our clients.

The goal of all this isn't to replace human judgment. It's to free up internal experts to focus on strategy, creativity, and decision-making while AI handles a meaningful portion of structured execution.

This is what AI product strategy looks like when implemented with excellence.

And it doesn't have to be broken.